GPT-5.4: OpenAI's RAG Regression

OpenAI released GPT-5.4, alongside a Pro variant designed for extended reasoning. We added both to our LLM-for-RAG leaderboard.

GPT-5.4 is OpenAI's latest frontier model. It features a 1M+ token context window, native computer-use capabilities, and configurable reasoning depth. The Pro version uses additional compute for complex tasks, with some requests taking several minutes.

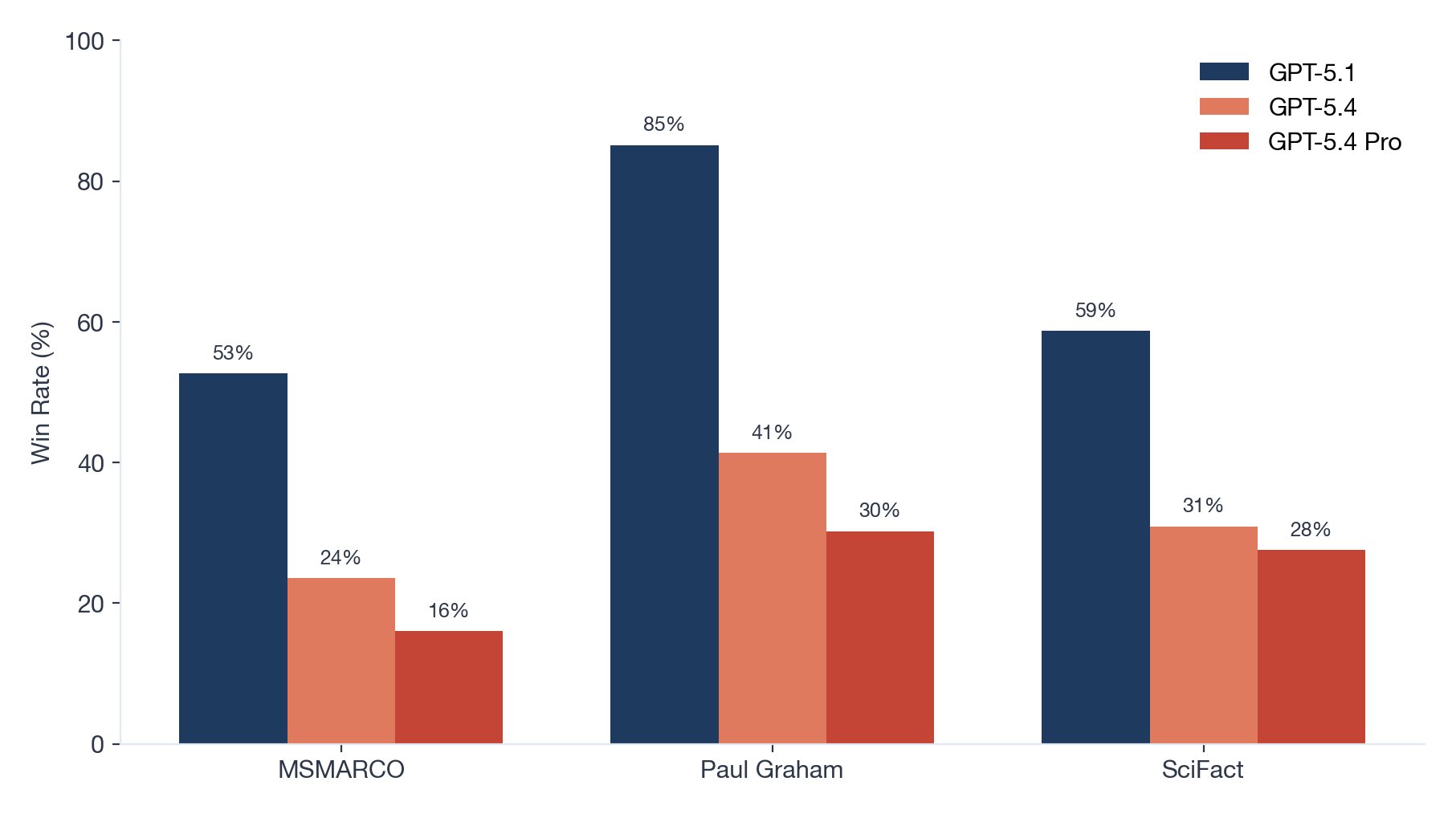

We tested both models on three RAG workloads: web-search QA (MSMARCO), essay synthesis (Paul Graham), and scientific verification (SciFact).

TL;DR

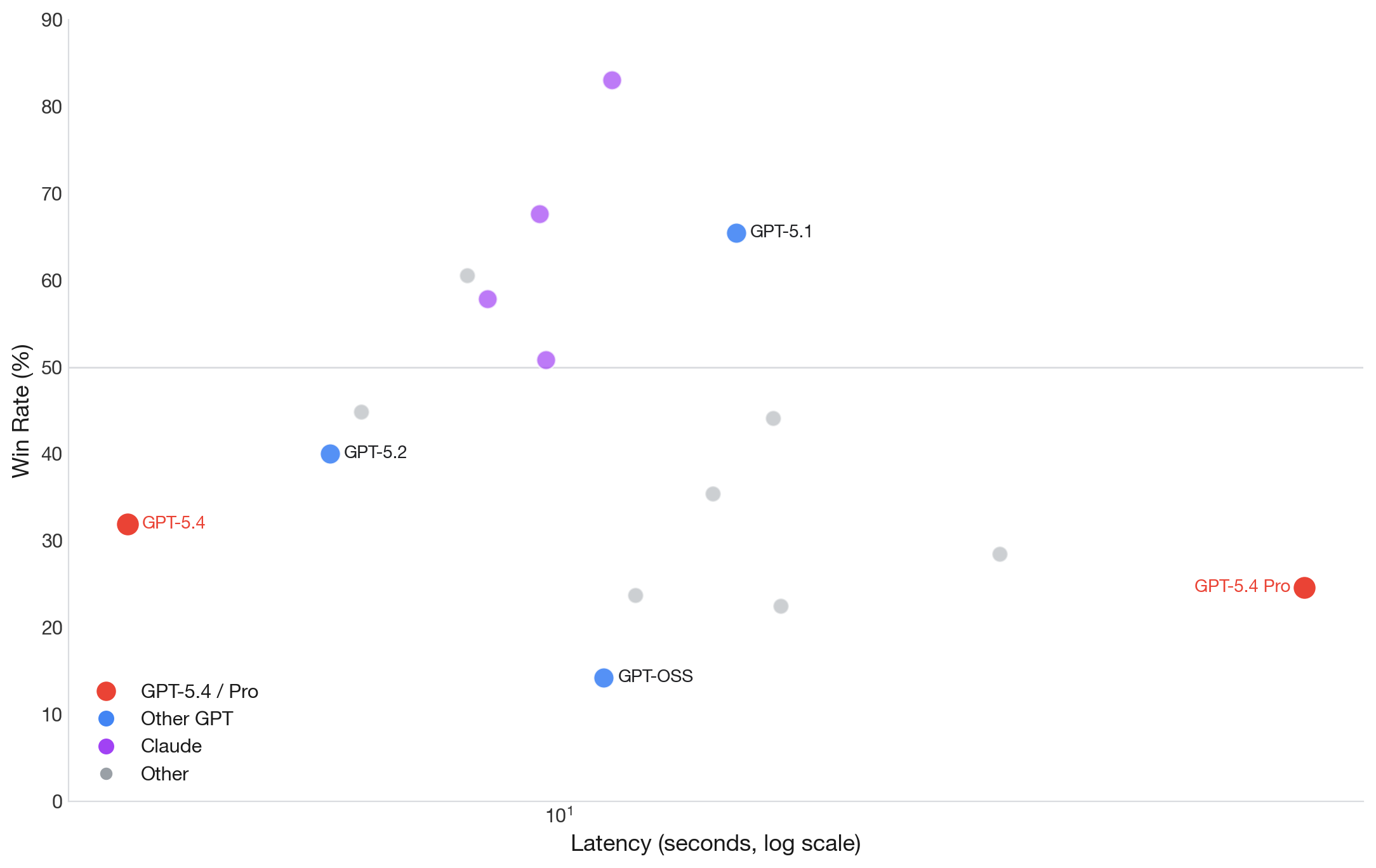

- GPT-5.4 ranks #10 overall with a 31.9% win rate.

- GPT-5.4 Pro ranks #13 with 24.6%.

- Both underperform GPT-5.1, which holds #3 at 65.5%.

- For RAG, the older model is better.

Key Findings

Regression from GPT-5.1

GPT-5.4 loses to its predecessor. In head-to-head matchups against GPT-5.1, it wins only 8% of the time: 7 wins and 72 losses across 90 comparisons, with the remaining 11 ending in ties.
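As a rough illustration of how the head-to-head percentage relates to the record, the sketch below computes a win rate with ties kept in the denominator. That convention is an assumption on our part; it is the one that reproduces both the 8% figure here and the 47% figure reported for the base-vs-Pro matchup. The function name `win_rate` is hypothetical and not part of the leaderboard tooling.

```python
def win_rate(wins: int, losses: int, total: int) -> float:
    """Share of all comparisons won; ties stay in the denominator (assumed convention)."""
    ties = total - wins - losses
    if min(wins, losses, ties) < 0:
        raise ValueError("record does not fit within the total number of comparisons")
    return wins / total


# GPT-5.4 vs GPT-5.1: 7 wins, 72 losses, 90 comparisons.
print(f"{win_rate(7, 72, 90):.1%}")   # 7.8%, reported as 8%

# GPT-5.4 vs GPT-5.4 Pro: 42 wins, 17 losses, 90 comparisons.
print(f"{win_rate(42, 17, 90):.1%}")  # 46.7%, reported as 47%
```

If ties were excluded instead, the same records would give noticeably different percentages, so the convention matters when comparing figures across leaderboards.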

The performance drop is consistent across all three datasets:

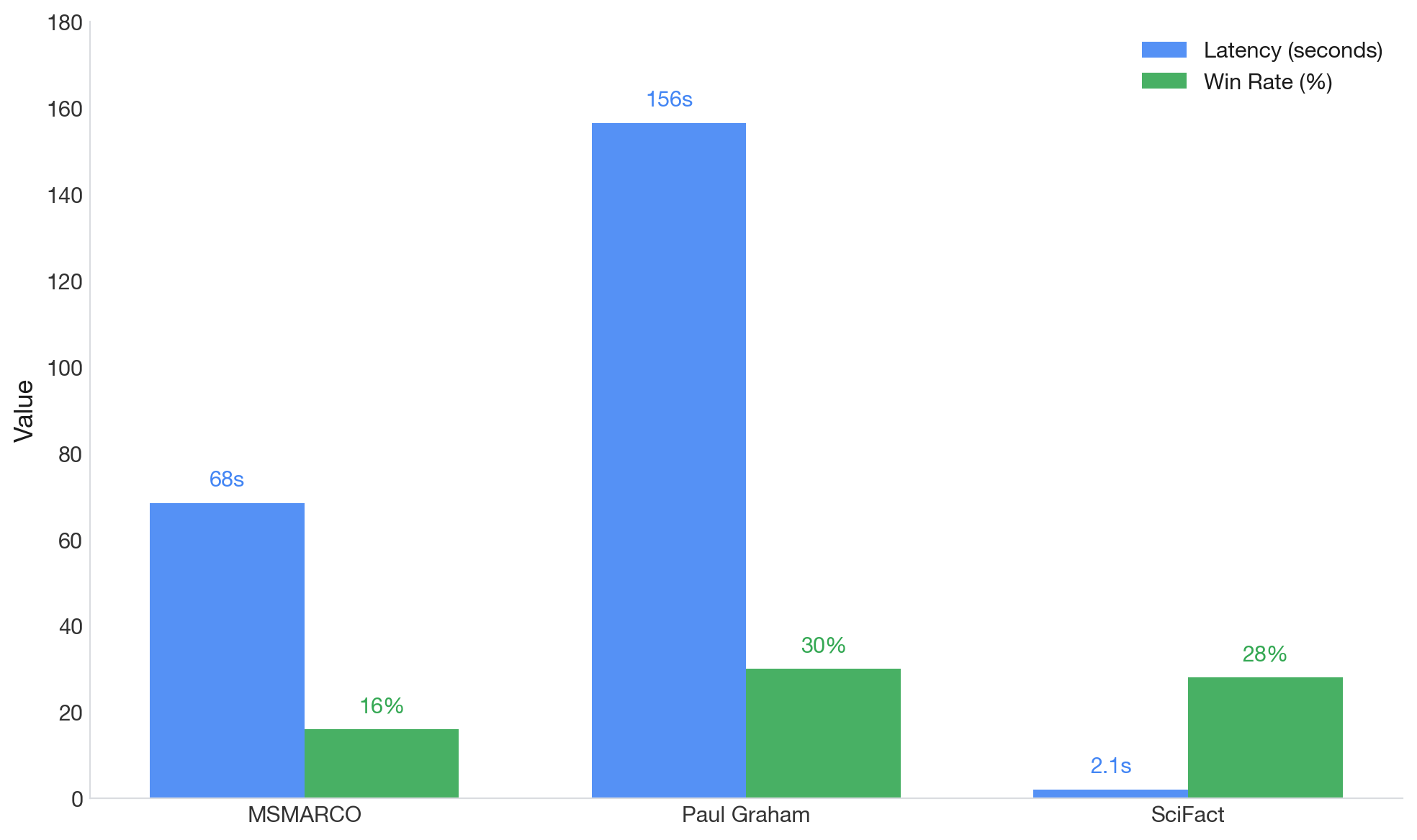

Pro tier: more latency, not more wins

GPT-5.4 Pro is designed for extended reasoning. On our benchmarks, this translates to extreme latency without quality gains.

On Paul Graham essays, GPT-5.4 Pro takes 2.6 minutes per query. GPT-5.1 takes 16 seconds and wins twice as often.

The base GPT-5.4 actually outperforms its Pro sibling, winning 47% of direct comparisons (42 wins, 17 losses, 31 ties). Extended reasoning appears to hurt RAG performance, possibly because the model drifts away from the retrieved context.

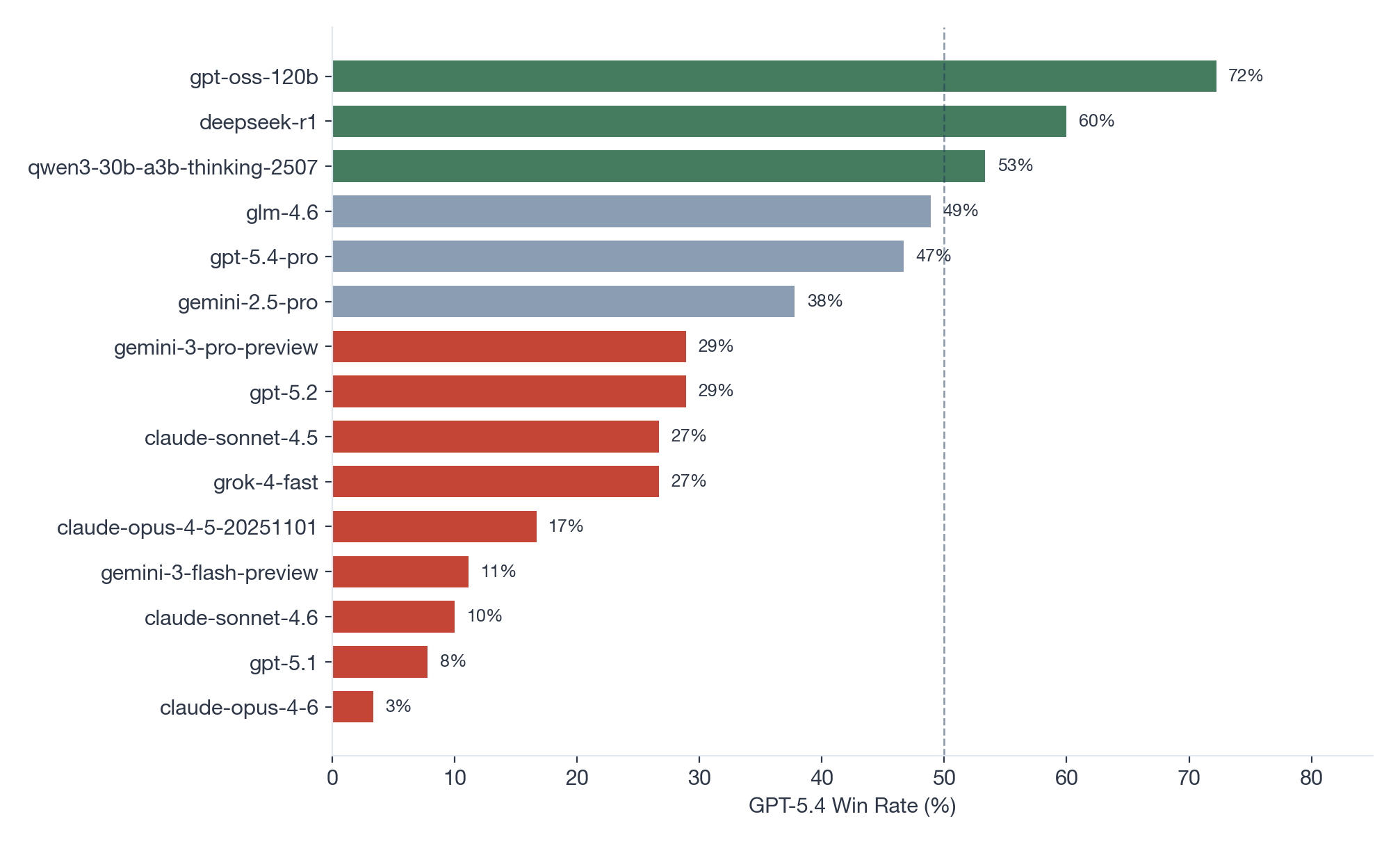

Competitive only against the bottom tier

GPT-5.4 comes out ahead against only three models: GPT-OSS-120B (72% win rate), DeepSeek R1 (60%), and Qwen3-30B (53%). Against every other model on the leaderboard, it struggles.

The Tradeoff

GPT-5.4 is fast. At 3.1 seconds average latency, it's roughly 5x faster than GPT-5.1 (16 seconds). If your workload prioritizes speed over quality, this matters.

But in the same latency tier, Gemini 3 Flash (60.6% win rate) outperforms GPT-5.4 (31.9%) while costing less.
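To put numbers on the tradeoff, the short sketch below works out the speedup and the win-rate gap between the two OpenAI models. It uses only the latency and win-rate figures quoted in this post; the dictionary layout and variable names are illustrative.

```python
# Figures quoted in this post: average latency (seconds) and overall win rate (%).
gpt54 = {"latency_s": 3.1, "win_rate": 31.9}
gpt51 = {"latency_s": 16.0, "win_rate": 65.5}

# How many times faster GPT-5.4 responds, and how many win-rate points it gives up.
speedup = gpt51["latency_s"] / gpt54["latency_s"]
quality_gap = gpt51["win_rate"] - gpt54["win_rate"]

print(f"GPT-5.4 is {speedup:.1f}x faster than GPT-5.1")          # 5.2x
print(f"GPT-5.1 leads by {quality_gap:.1f} win-rate points")     # 33.6 points
```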

Recommendation

For RAG workloads, use GPT-5.1. It remains OpenAI's strongest model for retrieval-grounded generation and ranks #3 overall.

GPT-5.4 makes sense only if you need sub-4-second latency and OpenAI specifically. Otherwise, Gemini 3 Flash offers better quality at similar speed.

GPT-5.4 Pro has no clear RAG use case. On our benchmarks, the extended reasoning degrades grounding rather than improving it, while adding minutes of latency per request.
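The recommendation above can be read as a small decision rule. The sketch below is one way to encode it; the function `recommend_model` and its two flags are hypothetical names introduced for illustration, and the rule reflects only the results in this post, not cost or other constraints you may have.

```python
def recommend_model(needs_sub_4s_latency: bool, openai_only: bool) -> str:
    """Pick a model for a RAG workload, following this post's recommendation."""
    if not needs_sub_4s_latency:
        # Quality first: GPT-5.1 is the strongest OpenAI model on our leaderboard.
        return "GPT-5.1"
    if openai_only:
        # Speed first, restricted to OpenAI: GPT-5.4 is the fast option.
        return "GPT-5.4"
    # Speed first, any provider: better quality at similar speed.
    return "Gemini 3 Flash"


print(recommend_model(needs_sub_4s_latency=False, openai_only=True))   # GPT-5.1
print(recommend_model(needs_sub_4s_latency=True, openai_only=True))    # GPT-5.4
print(recommend_model(needs_sub_4s_latency=True, openai_only=False))   # Gemini 3 Flash
```

GPT-5.4 Pro never appears as an output, which matches the finding that it has no clear RAG use case.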

Conclusion

GPT-5.4 appears to be optimized for computer-use and agentic workflows, not retrieval-augmented generation. The model's strengths (native tool integration, configurable reasoning) don't translate to better RAG performance.

For now, GPT-5.1 holds. We'll update the leaderboard as OpenAI iterates on 5.4.

See the full rankings on the LLM-for-RAG leaderboard.